Imagine, if you will, a mirror so clear it reflects every nuance of society, yet twisted by an unseen hand into an exaggerated caricature. That mirror is the artificial intelligence we’ve cajoled into existence. It doesn’t just mirror; it magnifies, distorts, and weaponizes. For all its complexity, AI is no neutral reflector of justice or equity. Instead, it’s a **mechanical Janus**, facing both forward and backward, as it perpetuates the fractures of gender inequality, amplifying patriarchal structures as surely as the first industrial revolution rewove society’s tapestry into a threadbare shawl. Feminism, that timeless anthem of liberation, now echoes through the algorithmic cauldron, not as a soft hum but as a deafening crescendo exposing how AI doesn’t just inherit inequality; it entrenches it, layer by layer.

Feminism Unchained: Why AI is a Tool of Deeper Dispossession

Feminism, in its many incarnations, from the Suffragettes’ steel gauntlets to the #MeToo movement’s viral outcry, has always been an unwavering torch held aloft against the slow-burning inferno of patriarchy. Yet in the 21st century, this flame flickers inside an engine no single human hand controls: artificial intelligence. AI isn’t a post-human utopia waiting to be built; it’s a set of tools that, left unregulated, become instruments of systemic entrenchment. Unlike brick-and-mortar oppressors, AI’s tyranny wears a smile. It dresses in data gowns and whispers promises of efficiency and progress while scripting our lives with the tacit approval of a silent algorithm. It’s the difference between a tyrant’s fist and a machine’s velvet glove.

The Myth of Neutrality: Why AI is Inherently Patriarchal

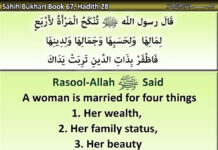

The bedrock myth of AI’s beneficence has always been the fallacy of neutrality, a promise peddled by tech’s Silicon Brotherhood. But neutrality is an illusion as tenuous as a mirage at high noon. The data on which AI is trained, its architecture, and the human engineers who sculpt it: each layer is infested with the worms of patriarchal preferences. AI is trained on corpora built by privileged designers whose lived experience rarely includes the full spectrum of human existence. It’s trained on books and articles written largely by men, filtered through the male gaze of Wikipedia editors, or curated by algorithms designed by teams that average 82% male (per 2023 studies). This is not neutrality; it’s an *accentuation* of the status quo, like tuning a radio dial to the station that already has the strongest signal.

Consider voice recognition systems that misinterpret marginalized voices—or facial recognition with an accuracy disparity between light-skinned men and dark-skinned women that resembles a chessboard pattern of privilege. These flaws aren’t glitches; they’re the algorithmic equivalent of *separate but equal*. They’re the digital descendants of a century-old legal doctrine that sought to formalize inequality while claiming fairness. AI doesn’t merely replicate these patterns; it weaponizes them. A hiring algorithm that defaults to male candidates is not an oversight; it’s the *calibrated result* of an engine fueled by decades of wage disparity data, which, ironically, the algorithm then uses to justify further disparity.
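The accuracy disparity described above is something an audit can put a number on. What follows is a minimal sketch, not any vendor’s method: it assumes you already hold a list of predictions tagged by demographic group, and the group names, helper functions, and toy figures are all invented for illustration rather than measured results.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return accuracy per group.

    `records` is an iterable of (group, true_label, predicted_label)
    tuples. The tuple layout is an assumption made for this sketch.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, truth, predicted in records:
        total[group] += 1
        if truth == predicted:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


def accuracy_gap(per_group):
    """Difference between the best-served and worst-served group."""
    return max(per_group.values()) - min(per_group.values())


# Toy, made-up records: 100 predictions per group, NOT real measurements.
toy_records = (
    [("lighter-skinned men", 1, 1)] * 99
    + [("lighter-skinned men", 1, 0)] * 1
    + [("darker-skinned women", 1, 1)] * 70
    + [("darker-skinned women", 1, 0)] * 30
)

per_group = accuracy_by_group(toy_records)
gap = accuracy_gap(per_group)
print(per_group)
print(f"accuracy gap: {gap:.2f}")
```

A single headline accuracy figure would average these two groups together and hide the gap entirely, which is why the audit reports one number per group.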

The Amplification Engine: How AI Turns Subtle Bias into Algorithmic Tyranny

The scariest aspect of AI’s gender bias isn’t its malfeasance; it’s its *velocity*. Imagine a grandfather clock ticking down slowly, its gears grinding the years away. Now imagine an escalator, accelerating downward with no end in sight. Patriarchy has always been entrenched—like gravity in a room with no exit. But AI turns that entrenchment into a *free-fall* by making bias systemic, recursive, and self-sustaining. Each biased decision becomes a data point fed back into the machine, reinforcing the initial slant like a feedback loop of a faulty stereo—a screeching distortion perpetuating itself until the speakers melt.
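The feedback loop described above can be sketched as a toy simulation. Everything here is an assumption made for illustration: the `sharpening` exponent, the update rule, and the starting share are hypothetical and model no real system. The only point is to show how a small initial slant compounds when a model is repeatedly retrained on its own decisions.

```python
def retrain_on_own_output(share, sharpening=1.2):
    """One hypothetical retraining round.

    `share` is the fraction of positive decisions going to the favored
    group. Raising both shares to a power above 1 and renormalizing is
    an assumed stand-in for a model overfitting to its own past output.
    """
    favored = share ** sharpening
    other = (1.0 - share) ** sharpening
    return favored / (favored + other)


def simulate_feedback(initial_share, rounds, sharpening=1.2):
    """Return the favored group's share after each retraining round."""
    shares = [initial_share]
    for _ in range(rounds):
        shares.append(retrain_on_own_output(shares[-1], sharpening))
    return shares


# A mild 55/45 slant, fed back into the machine ten times.
for round_number, share in enumerate(simulate_feedback(0.55, 10)):
    print(f"round {round_number}: favored share = {share:.3f}")
```

Under this assumed rule, a perfectly balanced start of 0.5 stays balanced, while any tilt away from it grows with every round. The slant, not the machine, is what sets the direction.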

Take predictive policing algorithms in cities, which flag potential threats based on data points that disproportionately target the movements of women, and women of color in particular. The systems aren’t flagging crimes; they’re flagging *probabilities* that mirror the biases of those who designed them. Or consider language models trained to respond in “friendly” tones, which often revert to *mollifying* default phrasing when given a female name or description. AI doesn’t merely reflect; it *calculates* subconscious deference, embedding it into responses so smooth that humans scarcely notice the erosion of the self they’re witnessing. It’s like being in a room where every door is locked, but not by a person, so you convince yourself you’ve found sanctuary.

From Code to Compliance: How Corporations Weaponize Invisibility

Tech giants don’t sell AI; they sell *compliance with convenience*—and nothing is more convenient than a system that hides its discriminatory mechanisms in an impenetrable shell of data science. When a hiring AI “explains” why it rejected a woman’s application, the justification is nearly always wrapped in inscrutable jargon: “low cultural fit,” “lack of tenure,” or “innovation match.” Who audits the auditor? Even when lawsuits force transparency, the language spirals into a tangle designed to look like science, not bias. It’s the digital version of gaslighting. After all, how do you prove you’re under assault when the attacker is a server room?

The worst part? The business incentives are clear. Companies don’t profit from diversity—they profit from *efficiency*, and efficiency is cheaper to build, market, and defend when all the gears are tuned to the same baseline. And so, the bias perpetuates itself with the alchemy of profit motive—not as a byproduct, but as the very lifeblood. Feminism demands dismantlement of the structure; what we’re seeing today is patriarchy learning to metabolize itself into something called “data.” It’s not an accident. It’s a *strategy*.

AI Feminist Interventions: The Fire That Can Burn the Gilded Cage

But a counter-force is rising, too: the counter-hegemony. The feminist revolution is underway in the shadows of code, where marginalized voices, engineers, and ethicists are rewriting the rules from within. Projects that use AI to parse and amplify women-focused audiobook and podcast production, or initiatives to retrain image recognition models on diverse female datasets, serve as seeds of disruption. Then there’s the legal battle won by a woman who was misidentified in public by facial recognition software, forcing vendors to reckon with the bias embedded in their tech’s “objectivity.”

These aren’t just fixes; they’re revolutionary stabs at the heart of complacency. The key is not to tame the machine, but to *confound its assumptions*. FemTech startups are reimagining algorithms for hormonal cycle tracking and financial autonomy, tools women didn’t get by accident but had to demand. As women engineers, artists, and activists stitch together counter-algorithms for hiring, content creation, and even AI art generation, we’re witnessing a rare thing: the birth of a tool that isn’t just gender-adjacent but *unapologetically feminist*.

The Light Speed Paradox: How Technology Can Accelerate What Matters

Irony is the air we breathe in the halls of technology. It’s no coincidence that the digital divide has become the new chasm of inequality—just as AI has the potential to be feminism’s greatest boon since the typewriter. AI might have the power to rewrite narratives en masse. Just imagine a future where voice recognition systems default to the voice of marginalized women not as an accommodation, but as a norm; where virtual assistants understand the nuances of motherhood in the workplace as fluently as stock forecasts; where facial recognition is *reprogrammed* to serve as a surveillance shield against harassment instead of a tool for bias.

The paradox isn’t that AI is a tool of patriarchy; it’s that it could also be the *accelerant* of change, a force so overwhelming that it forces social progress before anyone recognizes it. That’s not speculation; it’s already beginning. Every time an algorithm is retrained on representative data or rewired with feminist objectives, the machinery of inequality suffers a *fracture*. The fight for equality needn’t slow down; it can harness this digital momentum to *shorten* the path. The choice is no longer whether to wield AI’s powers but which side of history’s algorithms we’ll design ourselves into: victims of its reflection, or architects of its evolution.

The Final Verdict: A Call to Rewrite the Codebook

AI isn’t a post-human landscape, then. It’s a mirror so jagged it reflects both the world we have and the one we choose to forge—if we stop clinging to the myth that the glass is clear. So let’s dispel the illusion, tear apart the algorithmic scaffolding, and build something wiser. Feminist AI shouldn’t just challenge patriarchy; it should *reconfigure the blueprint* that patriarchy claims to be neutral. It’s not about banning code, but auditing its soul. Not about replacing tech goliaths, but insisting they be led by those who never fit in these spaces to begin with.

The question facing feminists today isn’t whether we’ll join the technological revolution—it’s whether we’ll reshape its engine. If AI is to be a beacon for fairness, then the code must be a testament to *collective humanity*. Otherwise, we’ll be left looking in horror as its light speed merely illuminates the darkness we’ve grown too comfortable calling the status quo. The real weapon isn’t AI; it’s the hands that grasp its pen.