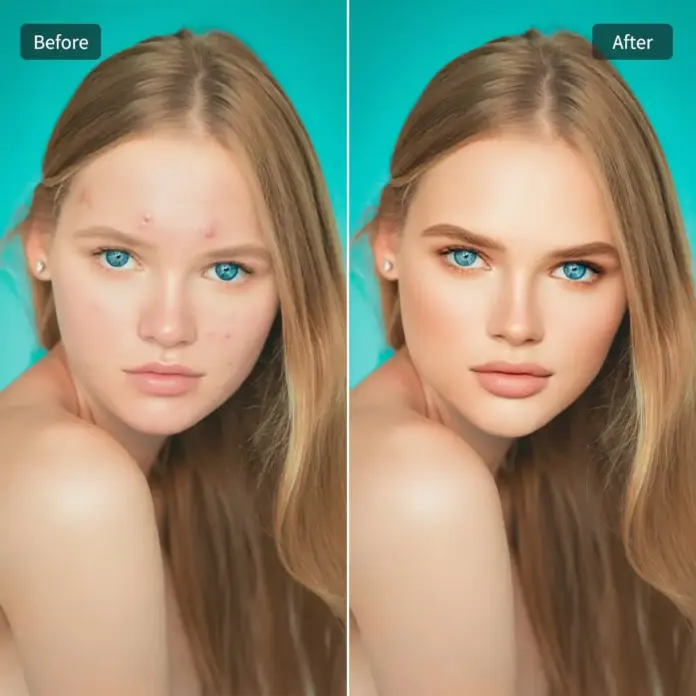

The digital age, especially through AI, reframes the complex interplay between technology and identity, and nowhere is this more evident than in tools like beauty filters. They promise transformation, offering glimpses into altered versions of ourselves – sometimes enhancements, sometimes radical revisions. Yet their very existence and pervasiveness demand a critical feminist examination. These algorithms, seemingly neutral gateways to enhanced beauty, operate within a matrix of patriarchal structures and techno-capitalist ambitions. They represent the collision of image, autonomy, and code: tools that can both liberate and restrict.

Whispers and Amplification: The Age of Visage-Curation

While the earliest iterations of digital airbrushing brought beauty standards into the pixelated realm of print, social media platforms propelled them into the high-definition immediacy of daily life. Suddenly, idealized beauty wasn’t just a dream whispered in magazines or discussed in salon gossip; it became a live stream, a selfie snapped in five seconds. AI, with its capacity for swift, complex modification, amplified this phenomenon dramatically. Features can be subtly softened, imperfections digitally erased, complexions adjusted without limit, and ages recalibrated at the touch of a button. This constant, algorithm-assisted reshaping of our visible selves, however, is not a neutral act. AI beauty filters democratize image manipulation but do not necessarily democratize the resulting ideals. They often replicate and even reinforce pre-existing beauty standards, merely re-rendering them with greater precision and accessibility.

Beauty Validation Revisited: The Digital Reflection

The promise of validation via digital enhancement feels potent. Who doesn’t desire a form of approval, a societal seal of beauty? For centuries, validation of female desirability rested on narrow, often biological markers dictated by patriarchal tastes – certain waist-to-hip ratios, specific hair textures, particular skin tones. Beauty filters, in their initial guise, seemed empowering: bypassing harsh standards, offering users control. But the ‘like’ becomes a metric for desirability, and the approval of the algorithm (often secretly shaped by human curators) mimics societal approval. The pursuit of aesthetic validation, increasingly mediated through AI, thus subtly shifts the locus of identity. Does the validation reward *digital authenticity* or simulated perfection? Does constant digital self-alteration erode the sense of a stable self, contributing to a persistent search for an unattainable ideal?

Beyond the Surface? Exploring Empowerment and Defiance

This narrative can’t be wholly bleak. Feminist analysis must also consider the potential for critique and resistance, because the proliferation of filters facilitates forms of defiance as well. Users, particularly younger generations familiar with coding and digital creation, are not merely consumers; many are creators. They design filters that invert gender norms, glitch male-centric beauty standards, or incorporate abstract aesthetics previously dismissed. Some filters move away from literal appearance altogether, altering features to signify specific concepts or feelings. The sheer volume of filter innovation hints at a collective, complex relationship with beauty and identity that extends beyond passive consumption. This subversion, though often operating outside dedicated feminist frameworks, demonstrates women’s agency in redefining representation.

The Quantified Self: Metrics and Meaning

Furthermore, AI beauty filters represent an early form of quantified aesthetics. What began as simple image warping is evolving towards more complex metrics. AI algorithms don’t just adjust pixels; they can analyze bone structure, skin texture, or facial symmetry against pre-defined data models (often based on human-identified ‘handsome’ or ‘attractive’ archetypes). User data aggregated through filters, while potentially used for personalized results, inevitably contributes to vast datasets about human appearance. This raises profound questions: Are we optimizing ourselves based on data that reflects historical bias? Is this digital mapping shaping our self-perception in quantifiable ways, imposing metrics onto subjective human worth? The feedback loop between AI analysis and user satisfaction might inadvertently refine societal pressures into algorithmic precision.

Objectification: The Algorithmic Gaze

A core feminist concern remains objectification. The gaze, traditionally one of desire, is now increasingly algorithmic. Our faces become data points; our features are assessed, rated, and remapped for aesthetic appeal. AI filters perform a continuous calibration, prompting users to adjust or abandon features that don’t fit algorithmic beauty models. This constant optimization can foster a consumer mindset towards one’s own body and face, turning self-image into a marketplace. Is the user curating their visage out of empowerment or out of market-driven imperatives dictated by the code and the corporate forces behind the platforms? The algorithm doesn’t desire; it assesses and categorizes. This subtle shift from human-driven desire to data-driven categorization poses a unique threat to embodied identity.

The Ethics of Play: Slippery Slopes and Identity Negotiation

The ethical terrain is complex. On one hand, filters offer playful exploration, allowing experimentation with identity that can be affirming, creative, or temporary. This ‘ludic’ dimension can be liberating, a safe space to break from normative constraints without serious consequence. The very act of transforming one’s appearance digitally allows for performance, artistic expression, and perhaps even catharsis. On the other hand, this ease can blur the lines between reality and simulation. What happens when digital exploration becomes so normalized that it affects our baseline perception of beauty or even self-worth? How do young women navigate emerging identity crises, questioning whether their self-perception is rooted in their authentic selves or in the digitally perfected avatars they’re accustomed to seeing?

Conclusion: The Filtered Future Requires an Unfiltered Lens

The tools that digitize and enhance our appearance stand at the crossroads of profound human potential and significant societal peril. Beauty AI, in its most sophisticated forms, doesn’t just offer new ways to look; it potentially reshapes how women see themselves and how they are judged. A truly feminist critique must dissect the inner workings of these algorithms, question whose beauty standards are being perpetuated, and scrutinize the data collection and user engagement practices behind them. The conversation isn’t just about ‘better’ filters making more women ‘feel better’ about themselves superficially. It is a deeper investigation into the nature of identity, autonomy, validation, and the very definition of femininity in the quantified era – an exploration that demands we look beyond the surface, precisely when the surface is increasingly mediated by the computer screen.